Originally written 14 May 1993 for the Extropians mailing list, and re-published in 2001 at the urging of a list member, with light editing by Melinda Green. Reformatted and with minor fixes December 2005.

Abstract:

This essay discusses the best current understanding of the relationship between mathematical and empirical knowledge. It focuses on two questions:

- Does mathematics have some sort of deep metaphysical connection with reality, and

- if not, why is it that mathematical abstractions seem so often to be so powerfully predictive in the real world?

Mathematics is the model of a-priori knowledge in the Aristotelian tradition of rationalism. Among the Greeks, geometry was regarded as the highest form of knowledge, a potent key to the metaphysical mysteries of the Universe. This is a rather mystical belief, and the connection to mysticism and religion was made explicit in cults like the Pythagorean. No culture since has semi-deified a man for discovering a geometrical theorem!

The Greek awe of mathematical knowledge is still with us; it's behind the traditional metaphor of mathematics as "Queen of the Sciences". It's been reinforced by the spectacular successes of mathematical models in science, successes the Greeks (lacking even simple algebra) could never have foreseen. Since Isaac Newton's discovery of calculus and the inverse-square law of gravity in the late 1600s, phenomenal science and higher mathematics have been closely symbiotic -- so much so, that the existence of a predictive mathematical formalism has become the hallmark of a "hard science".

For two centuries after Newton, phenomenal science aspired to the kind of rigor and purity that seemed to be embodied in mathematics. The metaphysical situation seemed simple: mathematics embodied perfect a-priori knowledge; those sciences best able to mathematize themselves were the most successful at phenomenal prediction; perfect knowledge would therefore consist of a mathematical formalism, arrived at by science and embracing all of reality, that would ground a-posteriori empirical understanding in a-priori rational logic. It was in this spirit that Condorcet dared to imagine describing the entire universe as a mutually-solving set of partial differential equations.

The first cracks in this inspiring picture appeared in the 19th century when Riemann and Lobachevsky independently proved that Euclid's Axiom of Parallels could be replaced by alternatives which yielded consistent geometries. Riemann's geometry was modeled on a sphere, Lobachevsky's on a hyperboloid of rotation.

The impact of this discovery has been obscured by later and greater upheavals, but at the time it broke on the intellectual world like a thunderbolt. For the existence of mutually inconsistent axiom systems for geometry, any of which could be modeled in the phenomenal universe, called the whole relationship between mathematics and physical theory into question.

When there was only Euclid, there was only one possible geometry. One could believe that the Euclidean axioms constituted a kind of perfect a-priori knowledge about geometry in the phenomenal world. But suddenly we had three geometries, an embarrassment of metaphysical riches.

For how were we to choose between the axioms of plane, spherical, and hyperbolic geometry as a description of "real" geometry? Because all three are consistent, we couldn't choose on any a-priori basis -- the choice had to become empirical, based on their predictive power for a given situation.
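One way to picture such an empirical choice is to measure the angles of a large triangle. The sketch below is a minimal illustration, not a method taken from the history above. It assumes the standard result that on a surface of constant curvature K the interior angles of a geodesic triangle sum to pi + K * area; the function names and the tolerance `tol` are hypothetical choices made for this example.

```python
import math

def angle_excess(angles):
    """Sum of a triangle's interior angles (in radians) minus pi."""
    return sum(angles) - math.pi

def classify_geometry(angles, tol=1e-9):
    """Pick the geometry that the measured angles are consistent with.

    tol stands in for measurement error (an assumption of this sketch).
    """
    excess = angle_excess(angles)
    if abs(excess) <= tol:
        return "plane"
    return "spherical" if excess > 0 else "hyperbolic"

def estimate_curvature(angles, area):
    """Estimate constant curvature K from excess = K * area."""
    return angle_excess(angles) / area

# One octant of a unit sphere: three right angles, area pi/2.
octant = [math.pi / 2] * 3
print(classify_geometry(octant))
print(estimate_curvature(octant, math.pi / 2))
```

Nothing in the three axiom systems tells us which branch the function will take; only the measured angles do, and only to within the tolerance of the measurement.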

Of course, physical theorists had long been accustomed to choosing formalisms to fit a scientific problem. But it had been widely, if unconsciously, assumed that the need to do so ad hoc was a function of human ignorance; that, given good enough mathematics and logic, we could deduce the correct choice from first principles, producing a-priori descriptions of reality to be confirmed, as an afterthought, by empirical check.

But now, the Euclidean geometry that had been considered the model for axiomatic perfection in mathematics for over two thousand years, had been dethroned. If one could not know something as fundamental as the geometry of space a-priori, what hope was there for a purely "rational" theory encompassing all of nature? Psychologically, Riemann/Lobachevsky struck at the very heart of the enterprise of mathematics as it was then conceived.

Furthermore, Riemann/Lobachevsky called the nature of mathematical intuition into question. It had been easy to believe implicitly that mathematical intuition was a form of perception -- a glimpse of the Platonic noumena behind reality. But with two other geometries jostling Euclid, nobody knew for sure what the noumena looked like any more!

Mathematicians responded to this dual problem with an increase in rigor, by trying to apply the axiomatic method throughout mathematics. It was gradually realized that the belief in mathematical intuition as a kind of perception of a noumenal world had encouraged sloppiness; proofs in the pre-axiomatic period often relied on shared intuitions about mathematical "reality" that could no longer be considered automatically valid.

The new thinking in mathematics led to a series of spectacular successes; among these were Cantorian set theory, Frege's axiomatization of number, and eventually Russell & Whitehead's monumental synthesis in Principia Mathematica.

However, it also had a price. The axiomatic method made the connection between mathematics and phenomenal reality narrower and narrower. At the same time, discoveries like the Banach-Tarski Paradox suggested that mathematical axioms that seemed to be consistent with phenomenal experience could lead to dizzying contradictions with that experience.

The majority of mathematicians quickly became "Formalists", holding that pure mathematics could not be philosophically considered more than a sort of elaborate game played with marks on paper (this is the theory behind Robert Heinlein's pithy characterization of mathematics as "a zero-content system"). The old-fashioned "Platonist" belief in the noumenal reality of mathematical objects seemed headed for the dustbin, despite the fact that mathematicians continued to feel like Platonists during the process of mathematical discovery.

Philosophically, then, the axiomatic method led most mathematicians to abandon previous beliefs in the metaphysical specialness of mathematics. It also created today's split between pure and applied mathematics.

Most of the great mathematicians of the early modern period -- Newton, Leibniz, Fourier, Gauss, and others -- were also phenomenal scientists (i.e. "natural philosophers"). The axiomatic method incubated the modern idea of the pure mathematician as super-esthete, unconcerned with the merely physical. Ironically, Formalism gave pure mathematicians a bad case of Platonic attitude. Applied mathematicians stopped being invited to tea and learned to hang out with physicists.

This brings us to the early 20th century. For the beleaguered minority of Platonists, worse was yet to come.

Cantor, Frege, Russell and Whitehead showed that all of pure mathematics could be built on the single axiomatic foundation of set theory. This suited the Formalists just fine; mathematics coalesced, at least in principle, from a bunch of little disconnected games to one big game. It also made the Platonist minority happy; if there turned out to be one big, over-arching consistent structure behind all of mathematics, the metaphysical specialness of mathematics might yet be rescued.

Unfortunately, it turns out that there is more than one way to axiomatize set theory. In particular, there are at least four major different combinations of assumptions about infinite sets that lead to mutually exclusive set theories (the Axiom of Choice or its negation; the Continuum Hypothesis or its negation).

It was Riemann/Lobachevsky all over again, but on a much more fundamental level. Riemannian and Lobachevskian geometry could be modeled finitely, in the world; you could decide at least empirically which one fit. Normally, you could regard all three as special cases of the geometry of geodesics on manifolds, thereby fitting them into the superstructure erected on set theory.

But the independent axioms in set theory don't seem to lead to any results that can be modeled in the observable finite world. And there's no way to assert both the Continuum Hypothesis and its negation in one set theory. How's a poor Platonist to choose which system describes "real" mathematics? The victory of the Formalist position seemed complete.

In a negative way, though, a Platonist had the last laugh. Kurt Gödel threw a spanner in the Formalist program of axiomatization when he showed that any axiom system powerful enough to include the integers would have to be either inconsistent (yielding contradictions) or incomplete (too weak to decide the truth or falsehood of some assertions in the system).

And that is more or less where things stand today. Mathematicians know that any attempt to put forward mathematics as a-priori knowledge about the universe must fall afoul of numerous paradoxes and impossible choices about what axiom system describes "real" mathematics. They've been reduced to hoping that the standard axiomatizations are not inconsistent but incomplete, and wondering uneasily what contradictions or unprovable theorems are awaiting discovery out there, lurking like landmines in the noosphere.

Meanwhile, on the empirical front, mathematics continued to be a spectacular success as a theory-building tool. The great successes of 20th-century physics (general relativity and quantum mechanics) wandered so far from the realm of physical intuition that they could be understood only by meditating deeply on their mathematical formalisms, and following through to their logical conclusions, even when those conclusions seem wildly bizarre.

What irony. Even as mathematical 'perception' came to seem less and less reliable in pure mathematics, it became more and more indispensable in phenomenal science!

Against this background, Einstein's famous quote wondering at the applicability of mathematics to phenomenal science poses an even thornier problem than at first appears.

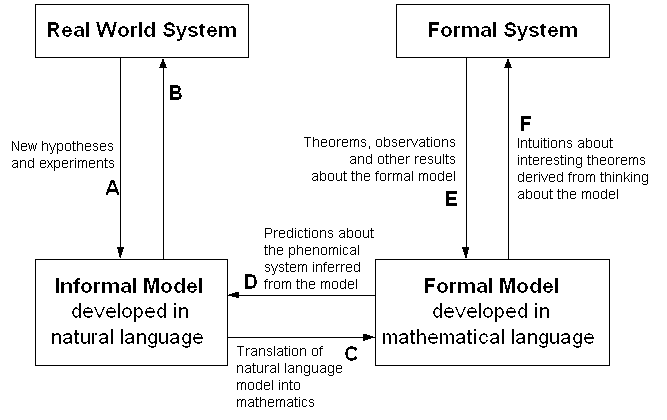

The relationship between mathematical models and phenomenal prediction is complicated, not just in practice but in principle. Much more complicated because, as we now know, there are mutually exclusive ways to axiomatize mathematics! It can be diagrammed as follows (thanks to Jesse Perry for supplying the original of this chart):

The key transactions for our purposes are C and D -- the translations between a predictive model and a mathematical formalism. What mystified Einstein is how often D leads to new insights.

We begin to get some handle on the problem if we phrase it more precisely; that is, "Why does a good choice of C so often yield new knowledge via D?"

The simplest answer is to invert the question and treat it as a definition. A "good choice of C" is one which leads to new predictions. The choice of C is not one that can be made a-priori; one has to choose, empirically, a mapping between real and mathematical objects, then evaluate that mapping by seeing if it predicts well.

For example, the positive integers are a good formalism for counting marbles. We can confidently predict that if we put two marbles in a jar, and then put three more marbles in the same jar, and then empirically associate the set of two marbles with the mathematical entity 2, and likewise associate the set of three marbles with the mathematical entity 3, and then assume that physical aggregation is modeled by +, then the number of marbles in the jar will correspond to the mathematical entity 5.

The above may seem to be a remarkable amount of pedantry to load on an obvious association, one we normally make without having to think about it. But remember that small children have to learn to count...and consider how the above would fail if we were putting into the jar, not marbles, but lumps of mud or volumes of gas!
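The pedantry can be spelled out as a toy sketch in Python. Everything here is an assumption made for illustration: jars are lists, each object is represented by its mass, and mud is taken to merge into a single lump whenever lumps are pressed together. The point is only that "aggregation is modeled by +" is a claim about the objects, to be checked, not a theorem.

```python
def combine_marbles(jar, added):
    """Marbles stay discrete: the jar simply holds more objects."""
    return jar + added

def combine_mud(jar, added):
    """Toy assumption: lumps of mud merge into one lump of summed mass."""
    lumps = jar + added
    return [sum(lumps)] if lumps else []

def prediction_holds(combine, first, second):
    """Check whether integer addition predicts the final object count."""
    jar = combine([], first)
    jar = combine(jar, second)
    predicted = len(first) + len(second)  # the mapping C, then +
    return len(jar) == predicted

two = [1.0, 1.0]          # two objects of unit mass
three = [1.0, 1.0, 1.0]   # three objects of unit mass

print(prediction_holds(combine_marbles, two, three))  # True
print(prediction_holds(combine_mud, two, three))      # False
```

For mud a different mapping works better: associate each lump with its mass rather than with the integer 1, and + predicts the total again. Finding that out is an empirical step.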

One can argue that it only makes sense to marvel at the utility of mathematics if one assumes that C for any phenomenal system is an a-priori given. But we've seen that it is not. A physicist who marvels at the applicability of mathematics has forgotten or ignored the complexity of C; he is really being puzzled at the human ability to choose appropriate mathematical models empirically.

By reformulating the question this way, we've slain half the dragon. Human beings are clever, persistent apes who like to play with ideas. If a mathematical formalism can be found to fit a phenomenal system, some human will eventually find it. And the discovery will come to look "inevitable" because those who tried and failed will generally be forgotten.

But there is a deeper question behind this: why do good choices of mathematical model exist at all? That is, why is there any mathematical formalism for, say, quantum mechanics which is so productive that it actually predicts the discovery of observable new particles?

The way to "answer" this question is by observing that it, too, properly serves as a kind of definition. There are many phenomenal systems for which no such exact predictive formalism has been found, and for which none seems likely. Poets like to mumble about the human heart, but more mundane examples are available. The weather, or the behavior of any economy larger than village size, for example -- systems so chaotically interdependent that exact prediction is effectively impossible (not just in fact but in principle).
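A small sketch can make "chaotically interdependent" less abstract. It uses the logistic map x -> r * x * (1 - x) with r = 4 as a stand-in; that choice is an assumption of this example, a textbook toy system and not a model of the weather or of any economy. Two starting values that differ by one part in ten billion are iterated side by side.

```python
def logistic_orbit(x0, steps, r=4.0):
    """Iterate x -> r * x * (1 - x), returning the whole trajectory."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs

def gaps(x0, delta, steps):
    """Distance between two orbits whose starting points differ by delta."""
    a = logistic_orbit(x0, steps)
    b = logistic_orbit(x0 + delta, steps)
    return [abs(p - q) for p, q in zip(a, b)]

g = gaps(0.2, 1e-10, 60)
print(g[10])   # still tiny after 10 steps
print(max(g))  # eventually comparable to the size of the whole interval
```

Since no measurement of a real system is exact, an initial uncertainty of this kind is always present, and in a system like this one it swamps the prediction after a few dozen steps.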

There are many things for which mathematical modeling leads at best to fuzzy, contingent, statistical results and never successfully predicts 'new entities' at all. In fact, such systems are the rule, not the exception. So the proper answer to the question "Why is mathematics so marvelously applicable to my science?" is simply "Because that's the kind of science you've chosen to study!"